Opus 4.6 & Agent Teams: Anthropic Just Changed the Game (Again)

Anthropic just dropped two massive updates that are sending shockwaves through the tech world. If you’ve been following the AI arms race, you know the players, but the leaderboard just got a serious shake-up.

Watch the full video here:

<iframe width="560" height="315" src="https://www.youtube.com/embed/OIrdcmDZCRQ" title="Opus 4.6 + Agent Teams Explained No Hype- 5 mins" frameborder="0" allowfullscreen></iframe>

In this guide, we break down why Opus 4.6 and the new Agent Teams feature aren't just incremental upgrades: they represent a fundamental shift in how we’ll work with AI.

1. Opus 4.6: The New King of Benchmarks

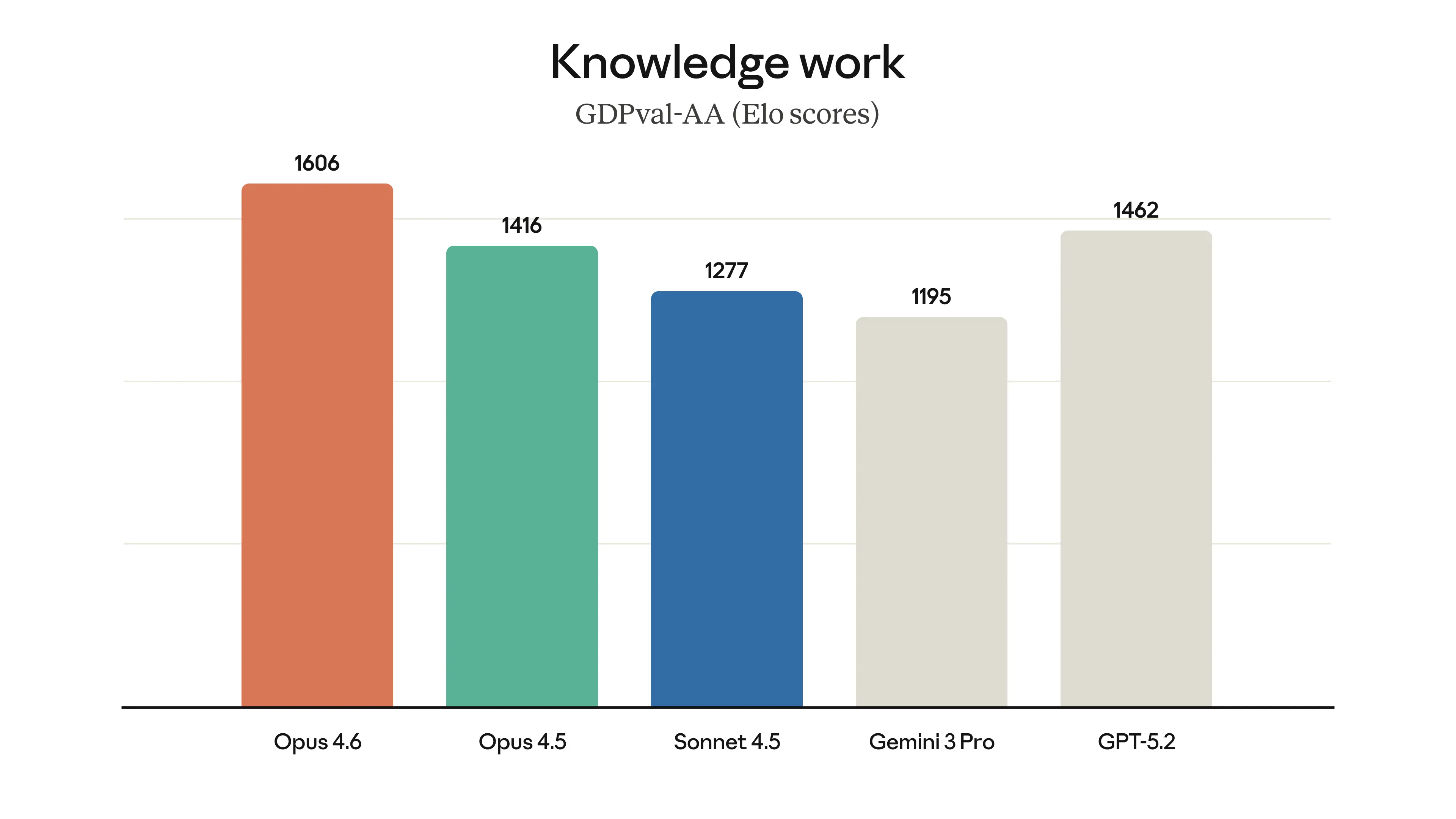

We’ve been waiting for a model to truly challenge the heavy hitters, and Opus 4.6 just did it. It’s now outperforming GPT-5.2 and Gemini 3 Pro on the benchmarks that actually reflect real-world work.

- Real-World Knowledge: In tasks involving finance, legal research, and complex data, Opus 4.6 scored 1600, well ahead of its closest competitors.

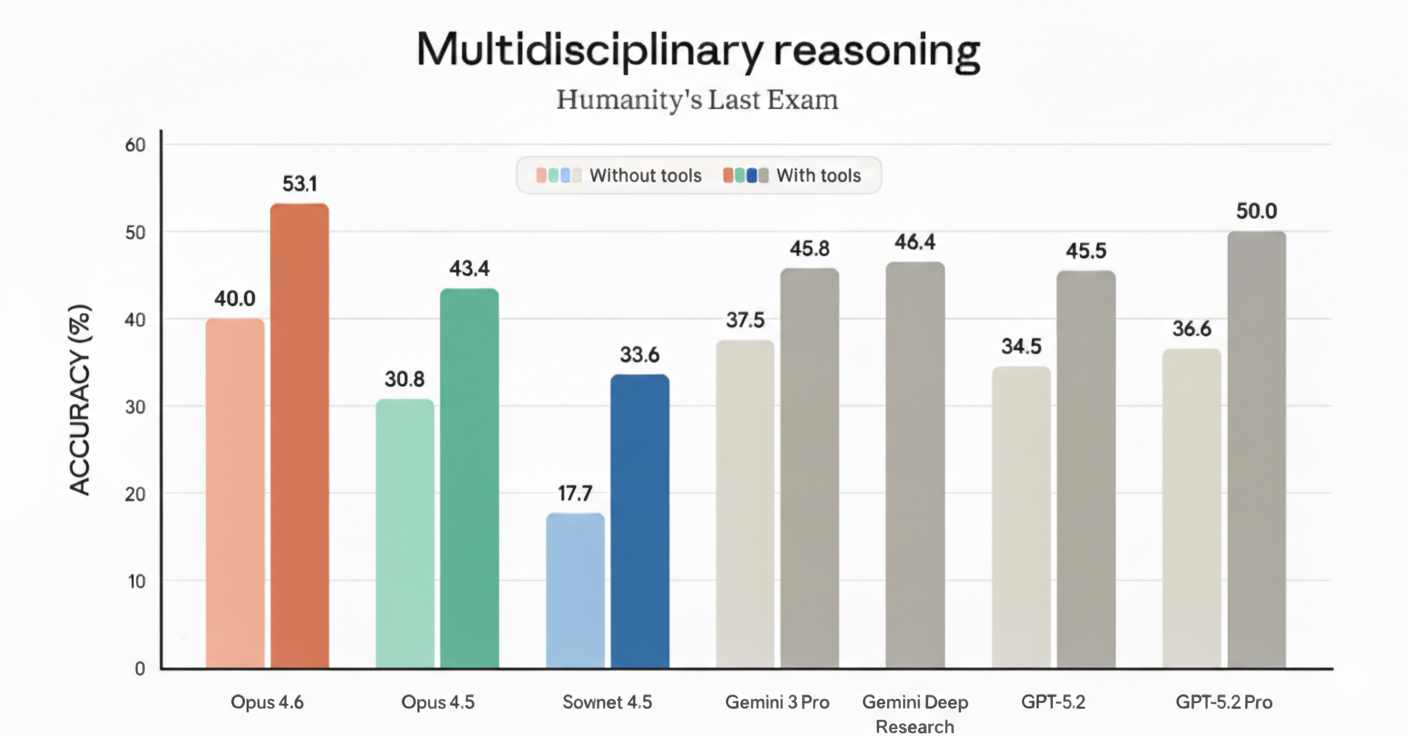

- Reasoning: On "Humanity's Last Exam" (the toughest reasoning test currently available), it hit 53% with tools, edging out GPT-5.2’s 50%.

- The Massive Context Leap: Perhaps the biggest "hidden" win is the 1 million token context window. While the previous model struggled to recall info at high volumes (scoring 18% on recall tests), Opus 4.6 scores a staggering 93%.

What this means for you: You can feed it entire codebases, massive legal files, or month-long project histories, and it won't "forget" the details halfway through.

2. From Chatbot to Department: Introducing "Agent Teams"

This is the update that actually changes the nature of work. Up until now, you talked to one AI, gave it one task, and it gave you one answer. Agent Teams flips the script.

Now, within Claude Code, you can tell Claude to create a team. It spawns multiple "sub-Claudes" that function like a real-world office:

- The Manager: One agent breaks down the project and assigns tasks.

- The Teammates: Other agents execute specific pieces (research, coding, financials).

- True Collaboration: They don't just report back to you; they talk to each other. They can challenge findings, share notes, and catch errors that a single agent working in a vacuum would miss.
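To make the manager/teammate pattern above concrete, here is a minimal toy sketch in Python. It is not Claude Code's API or how Agent Teams is implemented: the `Teammate` class, the `run_team` function, and the role names are all hypothetical, and the `work` and `review` methods are placeholders where a real agent would call a model. It only shows the shape of the workflow: a lead hands out tasks, then every teammate's result goes to the other teammates, not just back to the lead.

```python
from dataclasses import dataclass, field


@dataclass
class Teammate:
    """Hypothetical stand-in for one agent; a real teammate would be its own model session."""
    name: str
    inbox: list[str] = field(default_factory=list)

    def work(self, task: str) -> str:
        # Placeholder for the model call that would actually do the task.
        return f"{self.name} finished: {task}"

    def review(self, author: str, finding: str) -> str:
        # Placeholder for one agent checking or challenging another's result.
        return f"{self.name} reviewed {author}'s result ({finding!r})"


def run_team(tasks: dict[str, str]) -> list[str]:
    """The lead assigns one task per teammate, then teammates review each other."""
    team = {name: Teammate(name) for name in tasks}
    findings = {name: team[name].work(task) for name, task in tasks.items()}

    # Peer-to-peer step: each finding is sent to every other teammate's inbox.
    for author, finding in findings.items():
        for name, mate in team.items():
            if name != author:
                mate.inbox.append(mate.review(author, finding))

    # Collect all review notes so the lead (or you) can read them.
    return [note for mate in team.values() for note in mate.inbox]


notes = run_team({
    "researcher": "summarize competitor docs",
    "coder": "prototype the importer",
    "analyst": "check the financials",
})
for note in notes:
    print(note)
```

With three teammates, each one reviews the other two, so the number of review messages grows much faster than the number of agents. That is where the error-catching comes from, and also where the extra token usage comes from.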

3. Why Non-Coders Should Pay Attention

Right now, Agent Teams is in Claude Code (the developer tool). But there is a pattern here: Anthropic usually tests features with devs first, then rolls them out to Cowork (the interface for everyone else) within weeks.

When this hits Cowork, a non-technical manager could spin up a team of agents to:

- Analyze a Competitor: One agent scrapes socials, one digs into SEC filings, and one reviews their product docs.

- Cross-Reference Data: They compare notes to find discrepancies, such as a marketing agent reporting growth while the financial agent finds pending legislation that could kill the business.

This isn't a chatbot anymore; it's a department.

4. The Downsides

As someone who builds AI automations for a living, I have to give you the "no-hype" reality check:

- It’s Expensive: Every agent in a team is a separate session burning through tokens. If you let a team run wild on a massive project, your bill will reflect it.

- Experimental Friction: Currently, it’s off by default. You have to enable it in settings. If your session disconnects, you can’t pick up where you left off yet.

- OS Limits: The cool "split-screen" view where you can watch the agents talk in real time currently only works in tmux, a terminal multiplexer (it is not yet optimized for Windows or standard VS Code).
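The cost point is easy to put into rough numbers. The sketch below is a back-of-the-envelope estimate, not a billing calculator: the function name `team_cost_usd` is made up for illustration, the per-million-token prices are placeholders rather than Anthropic's actual rates, and it assumes every agent uses about the same number of tokens, which real teams won't.

```python
def team_cost_usd(
    agents: int,
    input_tokens: int,
    output_tokens: int,
    input_price_per_mtok: float,
    output_price_per_mtok: float,
) -> float:
    """Rough cost if each agent is a separate session using the same token counts."""
    per_agent = (
        (input_tokens / 1_000_000) * input_price_per_mtok
        + (output_tokens / 1_000_000) * output_price_per_mtok
    )
    return agents * per_agent


# Placeholder prices (USD per million tokens). Swap in the current rates for your plan.
INPUT_PRICE = 5.0
OUTPUT_PRICE = 25.0

single = team_cost_usd(1, 200_000, 20_000, INPUT_PRICE, OUTPUT_PRICE)
team = team_cost_usd(4, 200_000, 20_000, INPUT_PRICE, OUTPUT_PRICE)
print(f"single agent: ${single:.2f}, team of four: ${team:.2f}")
```

Under these assumptions a team of four costs four times a single session for the same per-agent workload, and that is before counting the extra tokens agents spend messaging each other.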

5. Should You Use It?

If you have access to Claude Code, turn it on today. Test it on a real project and see the difference in "thought quality" when two agents push back on each other’s logic. We are moving away from "AI as a tool" and toward "AI as a workforce." Whether you’re a developer or a business owner, the goal is no longer just "prompting"; it’s management.

Need help building your own AI workforce? Book a strategy call with our agency.
